Google announced Gemma 4 on April 2, 2026, positioning it as "byte for byte, the most capable open models" with a focus on advanced reasoning and agentic workflows. The release includes four models spanning from 2.3 billion active parameters up to 31 billion, each targeting a different deployment scenario, and all available on Hugging Face under Apache 2.0. The performance numbers are strong, but the more interesting story is in the specific engineering decisions Google made around memory efficiency, multimodal input handling, and the Mixture-of-Experts design that allows near-frontier quality from 4 billion active parameters.

The Gemma 4 model family

Gemma 4 is not a single model but a family of four, each designed for a different point on the capability-efficiency frontier. The naming convention reflects this: the two smaller models use an "E" prefix (for "effective", referring to the smaller parameter count that is active at inference time), while the two larger models are named for their total parameter counts.

| Model | Total Params | Active Params | Context | Modalities | License |
|---|---|---|---|---|---|
| E2B | 5.1B | 2.3B | 128K | Text, Image, Video, Audio | Apache 2.0 |
| E4B | 8B | 4.5B | 128K | Text, Image, Video, Audio | Apache 2.0 |
| 26B A4B | 26B (MoE) | 4B | 256K | Text, Image, Video | Apache 2.0 |
| 31B | 31B (Dense) | 31B | 256K | Text, Image, Video | Apache 2.0 |

All four models ship under Apache 2.0, which means no use-based restrictions, no registration requirements, and full commercial use, subject only to the license's standard notice and attribution terms for redistribution. Both base and instruction-tuned (IT) variants are available for each model.

The distinction between total and active parameters matters for understanding both memory requirements and inference costs. The E2B model has 5.1 billion total parameters (including embeddings) but only 2.3 billion are active during any given forward pass. The 26B MoE model takes this further: 26 billion parameters reside in memory, but only 4 billion activate per token, which means inference compute scales with the active count while memory scales with the total.
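The split between the two counts can be put into numbers with a short sketch. It uses the parameter counts from the table above and a common rule of thumb of about 2 FLOPs per active parameter per token, which is an approximation that ignores attention cost and is not a figure from the Gemma 4 release. The function names are illustrative.

```python
# Parameter counts in billions, taken from the model table above.
MODELS = {
    "E2B": {"total_b": 5.1, "active_b": 2.3},
    "E4B": {"total_b": 8.0, "active_b": 4.5},
    "26B A4B": {"total_b": 26.0, "active_b": 4.0},
    "31B": {"total_b": 31.0, "active_b": 31.0},
}


def weight_memory_gb(total_b: float, bytes_per_param: float = 2.0) -> float:
    """Weight memory in GB: every parameter must be resident, active or not."""
    return total_b * bytes_per_param


def gflops_per_token(active_b: float) -> float:
    """Rough forward-pass cost: about 2 FLOPs per active parameter per token."""
    return 2.0 * active_b


for name, p in MODELS.items():
    mem = weight_memory_gb(p["total_b"])
    cost = gflops_per_token(p["active_b"])
    print(f"{name:8s} {mem:5.1f} GB in FP16, ~{cost:4.1f} GFLOPs per token")
```

Under these assumptions the 26B A4B model needs most of the 31B model's memory but only about an eighth of its per-token compute.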

Architecture: what makes Gemma 4 different

Several architectural decisions in Gemma 4 diverge from the standard transformer recipe, and they have direct implications for how these models behave in production.

Per-Layer Embeddings (PLE)

The most distinctive feature is Per-Layer Embeddings, a mechanism where each decoder layer receives its own conditioning vector derived from the input tokens. In a standard transformer, the embedding layer produces a single representation that flows through all layers unchanged (aside from residual connections). PLE adds a secondary embedding table that provides per-layer residual signals, effectively giving each layer a different "view" of the input tokens.

The practical consequence is better parameter efficiency. Each layer can specialize more aggressively because it receives layer-specific conditioning, which means a 31B model with PLE can extract more capability per parameter than a 31B model without it. The overhead is modest: the secondary embedding table adds parameters but minimal inference latency.
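A toy sketch can make the mechanism concrete. This is a simplified illustration of the idea described above and not Gemma 4's actual implementation. The table sizes, the `toy_layer` stand-in, and the choice to add the per-layer vector directly to the residual update are all assumptions made for readability.

```python
import random

random.seed(0)

VOCAB, DIM, LAYERS = 10, 4, 3  # toy sizes, not Gemma 4's real dimensions


def rand_table(rows: int, cols: int) -> list[list[float]]:
    """Random lookup table standing in for learned embeddings."""
    return [[random.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]


# Standard token embedding: one table, looked up once at the input.
token_embedding = rand_table(VOCAB, DIM)

# Per-layer embeddings: one extra table per decoder layer, indexed by token id.
per_layer_embedding = [rand_table(VOCAB, DIM) for _ in range(LAYERS)]


def toy_layer(hidden: list[float]) -> list[float]:
    """Stand-in for attention + MLP; a real layer is far more complex."""
    return [0.5 * h for h in hidden]


def forward(token_id: int, use_ple: bool = True) -> list[float]:
    hidden = list(token_embedding[token_id])
    for layer in range(LAYERS):
        update = toy_layer(hidden)
        if use_ple:
            # Layer-specific signal for this token, added to the residual update.
            ple = per_layer_embedding[layer][token_id]
            update = [u + p for u, p in zip(update, ple)]
        hidden = [h + u for h, u in zip(hidden, update)]
    return hidden


print(forward(3, use_ple=False))
print(forward(3, use_ple=True))
```

The extra cost per layer is a table lookup and an addition, which matches the claim that the mechanism adds parameters but little latency.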

Alternating attention: local and global

Gemma 4 alternates between two attention patterns across its layers. Local layers use sliding-window attention with a window of 512 or 1024 tokens, which keeps memory and compute bounded regardless of sequence length. Global layers use full-context attention with proportional RoPE positional encoding, which enables the model to attend to the entire 128K or 256K context window.

This hybrid approach is what makes 256K context feasible on hardware that could not handle full attention at that length. The local layers handle most of the computation cheaply, while the global layers (which are fewer in number) handle the long-range dependencies that matter for retrieval and reasoning over long documents.
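The saving can be estimated by counting how many key-value positions each layer type has to keep. The sketch below assumes a hypothetical 30-layer stack with five local layers per global layer and a 1024-token window. The article states only that global layers are fewer in number, so the ratio and the layer count are placeholders.

```python
from typing import List, Optional


def attended_range(query_pos: int, window: Optional[int]) -> range:
    """Positions a causal query can see; window=None means full (global) attention."""
    start = 0 if window is None else max(0, query_pos - window + 1)
    return range(start, query_pos + 1)


def layer_pattern(num_layers: int, local_per_global: int) -> List[str]:
    """Interleave layers: N local layers followed by one global layer, repeated."""
    period = local_per_global + 1
    return ["global" if (i + 1) % period == 0 else "local" for i in range(num_layers)]


def cached_positions(seq_len: int, pattern: List[str], window: int) -> int:
    """Total KV positions held across all layers for one sequence."""
    return sum(seq_len if kind == "global" else min(seq_len, window) for kind in pattern)


# Hypothetical configuration: 30 layers, 5 local per global, 1024-token window.
pattern = layer_pattern(num_layers=30, local_per_global=5)
hybrid = cached_positions(256_000, pattern, window=1024)
full = cached_positions(256_000, ["global"] * 30, window=1024)
print(f"hybrid / full KV positions: {hybrid / full:.3f}")
```

With these placeholder numbers almost all of the remaining cache belongs to the global layers, which is why their count matters more than the window size at long context.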

Shared KV cache

In the larger models, the last N decoder layers share their key-value states rather than maintaining independent caches. This reduces the memory footprint of the KV cache and the compute required to fill it, which directly translates to higher throughput at longer context lengths. For production deployments running batch inference with 128K+ contexts, this is a meaningful optimization.
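A back-of-the-envelope estimate shows the size of the effect. The sketch assumes the shared layers reuse a single set of key-value states and treats every layer as full attention for simplicity. The layer count, head count, head dimension, and the value of N are hypothetical, since the article does not give them.

```python
def kv_cache_gb(seq_len, num_layers, kv_heads, head_dim,
                shared_last_n=0, bytes_per_value=2, batch=1):
    """KV cache size in GB when the last `shared_last_n` layers share one cache."""
    # The shared tail collapses into a single cached layer (an assumption).
    distinct_layers = num_layers - shared_last_n + (1 if shared_last_n > 0 else 0)
    # Factor of 2 covers keys and values.
    values = 2 * distinct_layers * kv_heads * head_dim * seq_len * batch
    return values * bytes_per_value / 1e9


# Hypothetical configuration: 48 layers, 8 KV heads of dimension 128, FP16 cache.
baseline = kv_cache_gb(128_000, num_layers=48, kv_heads=8, head_dim=128)
shared = kv_cache_gb(128_000, num_layers=48, kv_heads=8, head_dim=128, shared_last_n=16)
print(f"independent caches: {baseline:.1f} GB, shared tail: {shared:.1f} GB")
```

Because the cache also scales linearly with `batch`, the saving compounds under concurrent long-context requests.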

Mixture-of-Experts (26B A4B)

The 26B variant uses a Mixture-of-Experts architecture where each token is routed to a subset of expert networks. With 26 billion total parameters but only 4 billion active per token, the model achieves quality that approaches the 31B dense model while requiring significantly less compute per forward pass.

The important caveat for deployment planning is that MoE models require the full parameter set in memory even though only a fraction activates per token. You need enough VRAM to hold 26 billion parameters, but the inference speed is closer to what you would expect from a 4 billion parameter model. This makes MoE architectures particularly attractive for throughput-sensitive deployments where memory is available but per-token latency or cost is the binding constraint.
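The routing step can be sketched in a few lines of plain Python. This shows generic top-k routing with renormalized gate weights, a common MoE pattern. The expert count, the value of k, and the toy experts are assumptions, because the article does not describe Gemma 4's router.

```python
import math


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route(router_logits, top_k):
    """Pick the top_k experts for one token and renormalize their gate weights."""
    probs = softmax(router_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    return [(i, probs[i] / norm) for i in chosen]


def moe_forward(x, experts, router_logits, top_k=2):
    """Weighted sum of the selected experts' outputs; other experts never run."""
    out = [0.0] * len(x)
    for idx, gate in route(router_logits, top_k):
        y = experts[idx](x)
        out = [o + gate * v for o, v in zip(out, y)]
    return out


# Toy experts: each scales its input by a different constant.
experts = [lambda x, s=s: [s * v for v in x] for s in (1.0, 2.0, 3.0, 4.0)]
print(moe_forward([1.0, 1.0], experts, router_logits=[0.1, 2.0, 0.3, 1.5]))
```

All four toy experts have to exist in memory, but only two are evaluated for this token, which is the memory-versus-compute split described above.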

GPU memory requirements

Getting GPU sizing right for Gemma 4 requires accounting for model weights, KV cache, and runtime overhead. The weight footprint depends entirely on quantization precision, while the KV cache scales with context length and batch size.

Weight memory by precision

| Model | FP16 | INT8 | INT4 |
|---|---|---|---|
| E2B (5.1B) | 10.2 GB | 5.1 GB | 2.6 GB |
| E4B (8B) | 16 GB | 8 GB | 4 GB |
| 26B A4B (MoE) | 52 GB | 26 GB | 13 GB |
| 31B (Dense) | 62 GB | 31 GB | 15.5 GB |

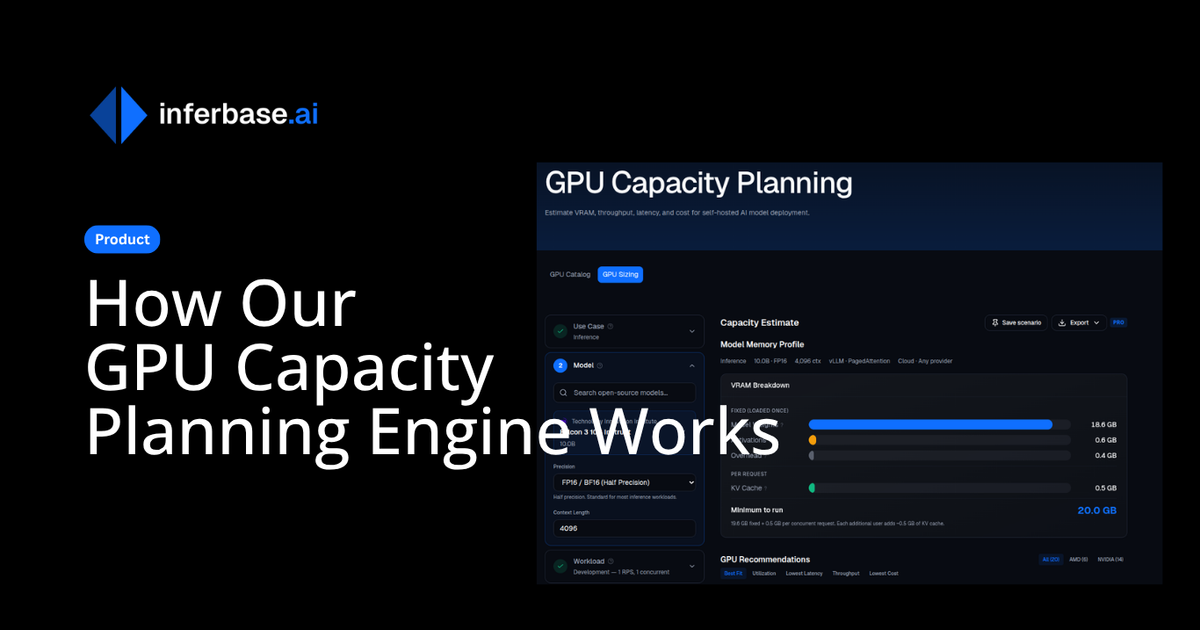

These are weight-only figures. In production, add 20-40% for KV cache, activations, and framework overhead depending on your context length and concurrency. If you need a more precise estimate for your specific deployment, our GPU sizing calculator handles the full memory budget calculation including KV cache and batch size.
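The table and the 20-40% rule reduce to simple arithmetic, sketched below. The bytes-per-parameter values are the standard ones for each precision, and the overhead range is the article's rule of thumb rather than a measured figure. The function names are illustrative.

```python
BYTES_PER_PARAM = {"FP16": 2.0, "INT8": 1.0, "INT4": 0.5}
TOTAL_PARAMS_B = {"E2B": 5.1, "E4B": 8.0, "26B A4B": 26.0, "31B": 31.0}


def weight_gb(model: str, precision: str) -> float:
    """Weight-only footprint in GB: total parameters times bytes per parameter."""
    return TOTAL_PARAMS_B[model] * BYTES_PER_PARAM[precision]


def budget_gb(model: str, precision: str, overhead=(0.20, 0.40)):
    """Return (low, high) total memory estimates using the 20-40% overhead rule."""
    w = weight_gb(model, precision)
    return w * (1 + overhead[0]), w * (1 + overhead[1])


for model in TOTAL_PARAMS_B:
    low, high = budget_gb(model, "INT4")
    print(f"{model:8s} INT4: {weight_gb(model, 'INT4'):5.2f} GB weights, "
          f"{low:5.2f}-{high:5.2f} GB with overhead")
```

For the 31B model in INT4 this gives a range that sits just under 24 GB, which is consistent with the "tight" label on the RTX 4090 in the hardware table.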

Hardware recommendations

| Model | Minimum viable | Recommended production |
|---|---|---|
| E2B | Any modern CPU, mobile SoC | Edge devices, Raspberry Pi 5, mobile |
| E4B | RTX 3060 12GB (INT4) | RTX 4070 Ti, T4, consumer GPU |
| 26B A4B | RTX 4090 24GB (INT4) | A100 40GB, L40S |
| 31B | RTX 4090 24GB (INT4, tight) | A100 80GB, H100 |

The E2B and E4B models are explicitly designed for on-device deployment, and their weight footprints bear that out. The E2B in INT4 requires roughly 2.6 GB for weights, which leaves headroom for KV cache even on a 4 GB mobile GPU. The E4B in INT4 needs about 4 GB for weights, so it fits on the 12 GB RTX 3060 listed above and runs comfortably on a 16 GB consumer GPU with room for moderate context lengths.

The 26B MoE and 31B dense models are more demanding. In FP16, the 31B's 62 GB of weights fit on a single 80 GB A100 or H100, leaving under 20 GB for KV cache and activations. With INT4 quantization, both models fit on an RTX 4090 (24 GB), though KV cache at long context lengths will push memory limits. For production serving with concurrent requests and long contexts, data center hardware is the practical minimum: an A100 40GB or L40S for the quantized 26B MoE, and an A100 80GB or H100 for the 31B.

For a detailed walkthrough of GPU memory calculations including KV cache sizing and multi-GPU setups, see our GPU sizing guide for LLM inference.

Benchmark performance

The benchmark numbers show where Gemma 4 sits relative to other open-weight and proprietary models. The standout result is the 26B MoE: it comes within about three percentage points of the 31B dense model on most benchmarks while activating only 4 billion parameters per token. BigBench Hard and Codeforces Elo are the exceptions, where the gap is considerably wider.

Reasoning and knowledge

| Benchmark | 31B | 26B A4B | E4B | E2B |
|---|---|---|---|---|
| MMLU Pro | 85.2% | 82.6% | 69.4% | 60.0% |
| AIME 2026 | 89.2% | 88.3% | 42.5% | 37.5% |
| GPQA Diamond | 84.3% | 82.3% | 58.6% | 43.4% |
| BigBench Hard | 74.4% | 64.8% | 33.1% | 21.9% |

The 31B model's 89.2% on AIME 2026 is particularly notable for a sub-40B open model. The MoE variant trails by less than 1 percentage point on AIME while using a fraction of the compute per token.

Coding

| Benchmark | 31B | 26B A4B | E4B | E2B |
|---|---|---|---|---|
| LiveCodeBench v6 | 80.0% | 77.1% | 52.0% | 44.0% |
| Codeforces Elo | 2150 | 1718 | 940 | 633 |

A Codeforces Elo of 2150 from the 31B model puts it in competitive territory with models that have significantly more parameters. The MoE variant at 1718 demonstrates that the expert routing is effective for code generation tasks, though the gap to the dense model is wider here than on reasoning benchmarks.
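The dense-versus-MoE gaps are easy to tabulate directly from the reasoning and coding tables. The sketch below only restates those published numbers and introduces no new results.

```python
# (31B dense, 26B A4B) scores in percent, copied from the tables above.
SCORES = {
    "MMLU Pro": (85.2, 82.6),
    "AIME 2026": (89.2, 88.3),
    "GPQA Diamond": (84.3, 82.3),
    "BigBench Hard": (74.4, 64.8),
    "LiveCodeBench v6": (80.0, 77.1),
}

# Gap in percentage points, rounded to avoid floating-point noise.
gaps = {name: round(dense - moe, 1) for name, (dense, moe) in SCORES.items()}

# Codeforces is an Elo rating, not a percentage, so it is handled separately.
codeforces_gap = 2150 - 1718

for name, gap in gaps.items():
    print(f"{name:18s} {gap:4.1f} points")
print(f"{'Codeforces Elo':18s} {codeforces_gap} Elo")
```

Four of the five percentage gaps are under three points. BigBench Hard and Codeforces are where the dense model pulls clearly ahead.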

Vision and multimodal

| Benchmark | 31B | 26B A4B | E4B | E2B |
|---|---|---|---|---|
| MMMU Pro | 76.9% | 73.8% | 52.6% | 44.2% |
| MATH-Vision | 85.6% | 82.4% | 59.5% | 52.4% |
| OmniDocBench (lower = better) | 0.131 | 0.149 | 0.181 | 0.290 |

All four models support vision input natively, with variable aspect ratio encoding that adjusts the token budget based on image content. The vision encoder uses learned 2D positions with multidimensional RoPE, which handles diverse image sizes without the distortion that comes from forced resizing.

Multimodal capabilities

Gemma 4's multimodal support goes beyond basic image understanding. The models handle text, images, video, and (for the smaller variants) audio through a unified architecture.

| Capability | E2B | E4B | 26B A4B | 31B |
|---|---|---|---|---|
| Text | Yes | Yes | Yes | Yes |
| Images | Yes | Yes | Yes | Yes |
| Video | Yes | Yes | Yes | Yes |
| Audio | Yes | Yes | No | No |

Audio support in the E2B and E4B models uses a USM-style conformer encoder, the same architecture Google used in Gemma 3n. This is significant for on-device applications that need speech understanding without a separate ASR pipeline.

The vision encoder supports configurable token budgets (70, 140, 280, 560, or 1120 tokens per image), which gives deployment teams fine-grained control over the trade-off between visual understanding quality and inference cost. Lower token budgets process images faster and consume less KV cache memory, which matters when processing many images or video frames in a single request.
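The trade-off is mostly multiplication, as the sketch below shows. It assumes each image or video frame consumes exactly its configured budget and ignores any separator or special tokens. The helper names and the frame counts are illustrative.

```python
TOKEN_BUDGETS = (70, 140, 280, 560, 1120)  # per-image budgets listed above


def visual_tokens(num_images: int, budget: int) -> int:
    """Context tokens consumed by a set of images at a given per-image budget."""
    if budget not in TOKEN_BUDGETS:
        raise ValueError(f"budget must be one of {TOKEN_BUDGETS}")
    return num_images * budget


def max_images(context_tokens: int, budget: int, reserved_text_tokens: int = 0) -> int:
    """How many images fit once some of the context is reserved for text."""
    return (context_tokens - reserved_text_tokens) // budget


# Example: 60 sampled video frames at each budget.
for budget in TOKEN_BUDGETS:
    print(f"budget {budget:4d}: {visual_tokens(60, budget):6d} tokens for 60 frames")
```

Moving from the largest to the smallest budget cuts the visual token count by 16x, which carries over directly to KV cache use for those tokens.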

Notable built-in capabilities include:

- Object detection with native JSON bounding box output
- GUI element detection for UI automation tasks
- Document understanding and OCR
- Video comprehension with multi-frame reasoning
- Function calling with multimodal inputs (e.g., analyzing an image and calling a tool based on what it sees)
- Extended thinking mode with configurable reasoning budgets up to 4,000 tokens

Agentic workflows and tool use

Google's framing of Gemma 4 around "agentic workflows" is not just marketing. The models ship with native function calling that works across modalities, and the extended thinking mode gives them a structured way to reason before acting. This combination is what separates a model that can answer questions from one that can operate as part of a multi-step pipeline.

The function calling implementation follows a tool-use pattern where the model receives a set of tool definitions (as JSON schemas), reasons about which tool to invoke, and returns structured JSON with the function name and arguments. What Gemma 4 adds is the ability to ground tool selection in multimodal context. A model can analyze an image, decide it needs to call a weather API based on a location visible in the photo, and return the structured call, all in a single inference pass.

tools = [{  # tool definitions are passed as JSON schemas
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Get current weather for a location",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}]

inputs = processor.apply_chat_template(  # processor: the AutoProcessor loaded for the checkpoint
    messages,  # chat history as a list of role/content dicts, defined elsewhere
    tools=tools,
    tokenize=True,
    return_dict=True,
    return_tensors="pt",
    add_generation_prompt=True,
    enable_thinking=True,  # turn on extended thinking
)

The enable_thinking=True flag activates extended thinking, which allocates up to 4,000 tokens for internal reasoning before the model produces its response. This is particularly useful for agentic tasks where the model needs to plan a sequence of actions or decide between multiple tools. The thinking tokens are generated but can be hidden from the final output, so the user sees only the result while the model benefits from the intermediate reasoning.

For teams building agent frameworks or multi-step pipelines, the practical implication is that Gemma 4 can serve as the reasoning core of an agent without relying on prompt engineering tricks to simulate tool use. The function calling and thinking capabilities are part of the model's training, not bolted on through system prompts.
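On the application side, the returned call still has to be executed. A minimal dispatch sketch follows, assuming the model's tool call has already been decoded into a JSON string with `name` and `arguments` fields. The article does not specify the exact wire format, and `get_weather`, `TOOL_REGISTRY`, and `dispatch` are hypothetical names with a stubbed result.

```python
import json


def get_weather(city: str) -> dict:
    """Hypothetical tool implementation; a real one would call a weather API."""
    return {"city": city, "temperature_c": 21, "conditions": "clear"}


# Maps tool names from the schema to the Python callables that implement them.
TOOL_REGISTRY = {"get_weather": get_weather}


def dispatch(tool_call_json: str) -> dict:
    """Parse one tool call and run the matching registered function."""
    call = json.loads(tool_call_json)
    name = call["name"]
    args = call.get("arguments", {})
    if name not in TOOL_REGISTRY:
        raise KeyError(f"unknown tool: {name}")
    return TOOL_REGISTRY[name](**args)


result = dispatch('{"name": "get_weather", "arguments": {"city": "Lisbon"}}')
print(result)
```

In an agent loop, the result would be appended to the conversation as a tool message and the model called again. Rejecting unknown tool names keeps a malformed call from executing arbitrary code.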

Deployment options

Gemma 4 has deployment paths ranging from browser-based WebGPU inference (E2B) to data center serving (31B).

Hugging Face Transformers is the reference implementation:

from transformers import AutoModelForMultimodalLM, AutoProcessor

model = AutoModelForMultimodalLM.from_pretrained(
    "google/gemma-4-31b-it",
    device_map="auto"
)
processor = AutoProcessor.from_pretrained("google/gemma-4-31b-it")

llama.cpp provides quantized inference for local and server deployments:

llama-server -hf ggml-org/gemma-4-E2B-it-GGUF

MLX targets Apple Silicon with TurboQuant support for roughly 4x memory reduction:

mlx_vlm.generate \
  --model google/gemma-4-E4B-it \
  --image image.jpg \
  --prompt "Describe this image"

Additional deployment paths include ONNX for cross-platform inference, mistral.rs for Rust-native serving, and transformers.js for browser-based inference via WebGPU.

Where each model fits

The four models target fundamentally different deployment scenarios, and choosing between them is less about which is "best" and more about which constraints dominate your use case.

E2B (2.3B active) is the right choice when the model must run on-device: mobile applications, edge inference, embedded systems, or scenarios where data cannot leave the device. Its audio support makes it particularly suitable for voice-enabled applications that need local processing.

E4B (4.5B active) occupies the space between on-device and cloud. It runs on consumer GPUs and handles multimodal tasks with audio support, making it a strong default for applications that have access to a single GPU but need more capability than the E2B provides.

26B A4B (4B active, MoE) is the efficiency play. If you have the memory budget for 26B parameters but need throughput closer to a 4B model, the MoE architecture delivers near-31B quality at a fraction of the per-token compute. This is the model to choose when you are serving high volumes of requests and inference cost per token is your primary concern.

31B (dense) is the quality ceiling. When you need the best possible output from an open-weight model under 40B parameters and have the hardware to support it, the 31B dense model is the straightforward choice. Its 256K context window and strong reasoning benchmarks make it suitable for complex tasks that require extended context and precise outputs.
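The guidance above can be condensed into a small decision helper. This is an illustrative encoding of the four descriptions. The flag names and the order of the checks are assumptions, and a real choice would also weigh memory budgets and measured quality on the target task.

```python
def pick_model(on_device: bool = False,
               needs_audio: bool = False,
               consumer_gpu: bool = False,
               throughput_bound: bool = False) -> str:
    """Map deployment constraints to a Gemma 4 variant, most restrictive first."""
    if on_device:
        return "E2B"      # must run on mobile, edge, or embedded hardware
    if needs_audio or consumer_gpu:
        return "E4B"      # audio is limited to the E models; fits a single consumer GPU
    if throughput_bound:
        return "26B A4B"  # memory for 26B is available, per-token cost dominates
    return "31B"          # quality ceiling when hardware is not the constraint


print(pick_model(on_device=True))
print(pick_model(needs_audio=True))
print(pick_model(throughput_bound=True))
print(pick_model())
```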

What this means for the open-source landscape

Gemma 4 continues the trend of open-weight models closing the gap with proprietary offerings, but the MoE variant is where the release gets strategically interesting. A model that achieves 88.3% on AIME 2026 with only 4 billion active parameters per token changes the economics of self-hosted inference in a concrete way: per-token compute is roughly 8x lower than for the 31B dense model (4B versus 31B active parameters), while quality stays within a few points of it on most benchmarks.

The Apache 2.0 licensing removes the usage restrictions that complicate deployment of some other open-weight models. There are no registration requirements, no commercial use limitations, and no output attribution requirements, which makes Gemma 4 a viable foundation for production applications without legal overhead.

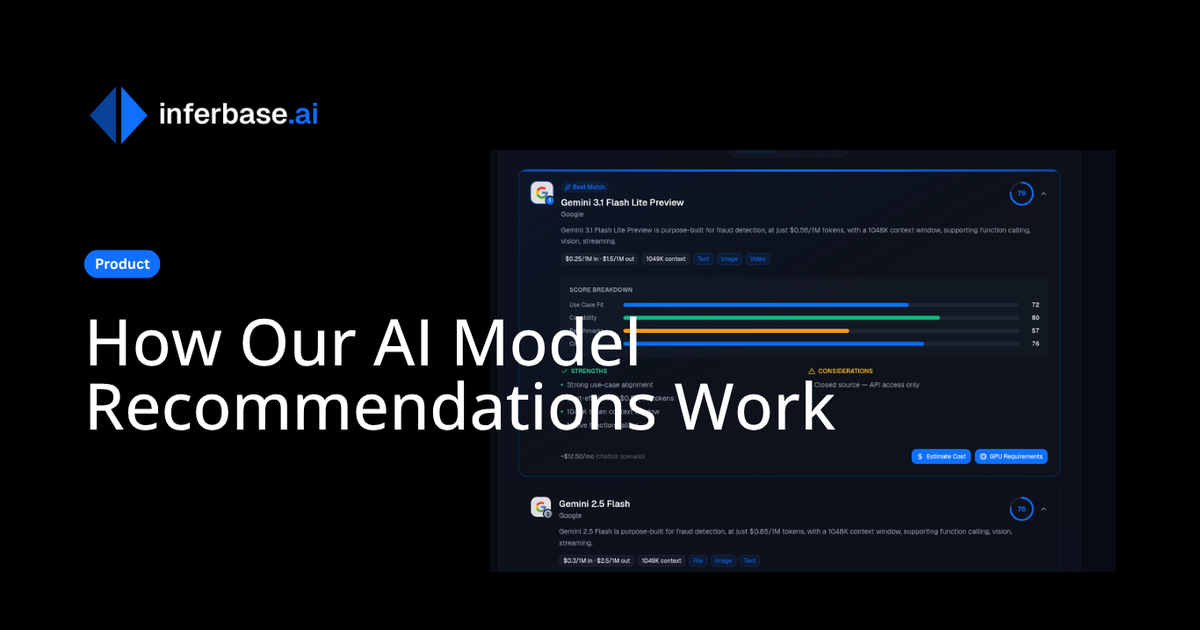

For teams evaluating whether to self-host or use API access, the GPU memory requirements and cost implications of each variant are worth calculating precisely. Our model comparison tool and GPU sizing calculator can help you run those numbers against your specific workload and hardware constraints.